1. Setting up the environment

This part is fairly simple.

1.1 Install CentOS 7, which ships with Python 2.7.5 (just download the ISO image and install it; I used VMware 12.5).

1.2 Install TensorFlow

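Install pip (python-pip comes from the EPEL repository, so epel-release has to be installed in an earlier step than python-pip):

yum update -y && yum install -y python python-devel epel-release.noarch && yum install -y python-pip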

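Install TensorFlow with pip:

pip install https://storage.googleapis.com/tensorflow/linux/cpu/tensorflow-0.5.0-cp27-none-linux_x86_64.whl

Note: the training and test code below uses `tf.global_variables_initializer` and `tf.summary.FileWriter`, which were added in TensorFlow 0.12, so this 0.5.0 wheel is too old to run it. Install a 1.x release (0.12 or later, before 2.0) instead.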
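To confirm that an installed TensorFlow can run this post's graph-mode code, you can compare its version string against the range the code assumes: 0.12 (where `tf.global_variables_initializer` appeared) up to, but not including, 2.0 (where `tf.placeholder` and `tf.Session` moved under `tf.compat.v1`). A minimal Python 3 sketch; `supports_tf1_graph_api` is a hypothetical helper, not part of TensorFlow:

```python
import re


def supports_tf1_graph_api(version: str) -> bool:
    """True if `version` is a TensorFlow release in [0.12, 2.0)."""
    # Compare only the leading "major.minor" numbers, e.g. "1.15.0rc1" -> (1, 15)
    match = re.match(r"(\d+)\.(\d+)", version)
    if match is None:
        return False
    major_minor = (int(match.group(1)), int(match.group(2)))
    return (0, 12) <= major_minor < (2, 0)
```

Usage: `import tensorflow as tf` followed by `print(supports_tf1_graph_api(tf.__version__))`.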

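1.3 Install Flask (the Python web framework used for the API)

pip install flask

The code below also imports PIL and numpy, which can be installed with pip install pillow numpy.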

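1.4 Download the MNIST training data

MNIST dataset files:

https://files.cnblogs.com/files/keim/train-images-idx3-ubyte.gz.rar (after downloading, remove the .rar suffix so that the file is named train-images-idx3-ubyte.gz)

https://files.cnblogs.com/files/keim/MNIST_data1.rar (extract this archive and put its contents in the same directory as the file above)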
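To check that a renamed file is really an intact MNIST file, you can read its header. In the IDX format used by MNIST, the first two bytes are zero, the third gives the element type (0x08 for unsigned bytes) and the fourth the number of dimensions, followed by one big-endian 32-bit size per dimension: image files have 3 dimensions (count, 28, 28), label files have 1. The training code does not need this, since `input_data.read_data_sets` reads the files itself; the Python 3 sketch below, with hypothetical function names, is only a stand-alone check.

```python
import gzip
import struct


def parse_idx(data: bytes):
    """Parse an uncompressed IDX buffer of unsigned bytes; return (shape, values)."""
    zero, type_code, ndim = struct.unpack(">HBB", data[:4])
    if zero != 0 or type_code != 0x08:
        raise ValueError("not an unsigned-byte IDX file")
    # One big-endian 32-bit size per dimension follows the 4-byte magic number
    shape = struct.unpack(">" + "I" * ndim, data[4:4 + 4 * ndim])
    body = data[4 + 4 * ndim:]
    expected = 1
    for dim in shape:
        expected *= dim
    if len(body) != expected:
        raise ValueError("payload has %d bytes, header says %d" % (len(body), expected))
    return shape, list(body)


def load_idx_gz(path: str):
    """Read a gzip-compressed IDX file such as train-images-idx3-ubyte.gz."""
    with gzip.open(path, "rb") as f:
        return parse_idx(f.read())
```

For example, `load_idx_gz("MNIST_data/train-images-idx3-ubyte.gz")[0]` should give `(60000, 28, 28)` for the standard MNIST training images; a `ValueError` or a gzip error suggests the download or renaming went wrong.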

2. Training code

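Below is the training code. MNIST_data is the directory containing the MNIST files downloaded above. The script first trains a softmax-regression baseline and prints its test accuracy, then trains a two-layer convolutional network and saves its parameters to a checkpoint.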

```python
#coding=utf-8
import os

from tensorflow.examples.tutorials.mnist import input_data
import tensorflow as tf

# 'MNIST_data' is the directory holding the MNIST files downloaded above
mnist = input_data.read_data_sets('MNIST_data', one_hot=True)
sess = tf.InteractiveSession()

# Part 1: softmax regression baseline
x = tf.placeholder(tf.float32, shape=[None, 784])
y_ = tf.placeholder(tf.float32, shape=[None, 10])
W = tf.Variable(tf.zeros([784, 10]))
b = tf.Variable(tf.zeros([10]))
sess.run(tf.global_variables_initializer())
y = tf.matmul(x, W) + b
cross_entropy = tf.reduce_mean(
    tf.nn.softmax_cross_entropy_with_logits(labels=y_, logits=y))
train_step = tf.train.GradientDescentOptimizer(0.5).minimize(cross_entropy)
for _ in range(1000):
    batch = mnist.train.next_batch(100)
    train_step.run(feed_dict={x: batch[0], y_: batch[1]})
correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
print(accuracy.eval(feed_dict={x: mnist.test.images, y_: mnist.test.labels}))

# Part 2: convolutional network
def weight_variable(shape):
    initial = tf.truncated_normal(shape, stddev=0.1)
    return tf.Variable(initial)

def bias_variable(shape):
    initial = tf.constant(0.1, shape=shape)
    return tf.Variable(initial)

def conv2d(x, W):
    return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')

def max_pool_2x2(x):
    return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

W_conv1 = weight_variable([5, 5, 1, 32])
b_conv1 = bias_variable([32])
x_image = tf.reshape(x, [-1, 28, 28, 1])
h_conv1 = tf.nn.relu(conv2d(x_image, W_conv1) + b_conv1)
h_pool1 = max_pool_2x2(h_conv1)

W_conv2 = weight_variable([5, 5, 32, 64])
b_conv2 = bias_variable([64])
h_conv2 = tf.nn.relu(conv2d(h_pool1, W_conv2) + b_conv2)
h_pool2 = max_pool_2x2(h_conv2)

W_fc1 = weight_variable([7 * 7 * 64, 1024])
b_fc1 = bias_variable([1024])
h_pool2_flat = tf.reshape(h_pool2, [-1, 7 * 7 * 64])
h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)

keep_prob = tf.placeholder(tf.float32)
h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob)

W_fc2 = weight_variable([1024, 10])
b_fc2 = bias_variable([10])
y_conv = tf.matmul(h_fc1_drop, W_fc2) + b_fc2

cross_entropy = tf.reduce_mean(
    tf.nn.softmax_cross_entropy_with_logits(labels=y_, logits=y_conv))
train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)
correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y_, 1))
accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

saver = tf.train.Saver()  # defaults to saving all variables
sess.run(tf.global_variables_initializer())
# 20000+ steps reach about 99% accuracy
for i in range(20000):
    batch = mnist.train.next_batch(50)
    if i % 100 == 0:
        train_accuracy = accuracy.eval(feed_dict={
            x: batch[0], y_: batch[1], keep_prob: 1.0})
        print("step %d, training accuracy %g" % (i, train_accuracy))
    train_step.run(feed_dict={x: batch[0], y_: batch[1], keep_prob: 0.5})

# Write the graph so it can be inspected in TensorBoard
writer = tf.summary.FileWriter("Scripts", tf.get_default_graph())
writer.close()

print('save file')
save_dir = 'learning_tensorflow'  # change this to your own path
if not os.path.isdir(save_dir):
    os.makedirs(save_dir)  # Saver.save fails if the parent directory does not exist
saver.save(sess, os.path.join(save_dir, 'model.ckpt'))  # save the model parameters
print('save file ok')
# print("test accuracy %g" % accuracy.eval(feed_dict={x: mnist.test.images, y_: mnist.test.labels, keep_prob: 1.0}))
```
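The `7 * 7 * 64` in the fully connected layer follows from the tensor shapes. With `padding='SAME'`, TensorFlow's output size is ceil(input / stride), so each stride-1 convolution keeps the image at its size, each 2×2, stride-2 pooling halves it (28 → 14 → 7), and the second convolution produces 64 channels. A small Python 3 sketch of that arithmetic, with hypothetical helper names:

```python
import math


def same_output_size(size: int, stride: int) -> int:
    """Spatial output size of a TensorFlow conv/pool op with padding='SAME'."""
    return math.ceil(size / stride)


def flattened_size(image_size: int = 28, channels: int = 64, blocks: int = 2) -> int:
    """Length of the vector fed to the first fully connected layer."""
    size = image_size
    for _ in range(blocks):
        size = same_output_size(size, 1)  # 5x5 convolution, stride 1: size unchanged
        size = same_output_size(size, 2)  # 2x2 max pooling, stride 2: size halved
    return size * size * channels


print(flattened_size())  # 3136, i.e. 7 * 7 * 64
```

The same helper shows why the input must be 28×28: any other size changes the flattened length, and the reshape to `[-1, 7*7*64]` would no longer match the trained `W_fc1`.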

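3. Test code

The test script rebuilds the same network, restores the parameters saved by the training script, and classifies one image file.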

```python
#coding=utf-8
from PIL import Image
import tensorflow as tf


def imageprepare():
    """Return the pixel values of an image file as a list of 784 floats."""
    file_name = 'pic_data/3.png'  # path to your own image
    # In a terminal, 'mogrify -format png *.jpg' converts JPG files to PNG
    # Grayscale, resized to 28x28 like the MNIST images
    im = Image.open(file_name).convert('L').resize((28, 28))
    tv = list(im.getdata())  # get pixel values
    # Normalize pixels to 0..1: 0 is pure white, 1 is pure black
    tva = [(255 - x) * 1.0 / 255.0 for x in tv]
    return tva


result = imageprepare()

# Define the model (same as when creating the model file)
x = tf.placeholder(tf.float32, [None, 784])
# W and b are unused here, but they must be created so that the automatically
# generated variable names (Variable, Variable_1, ...) match the checkpoint
W = tf.Variable(tf.zeros([784, 10]))
b = tf.Variable(tf.zeros([10]))

def weight_variable(shape):
    initial = tf.truncated_normal(shape, stddev=0.1)
    return tf.Variable(initial)

def bias_variable(shape):
    initial = tf.constant(0.1, shape=shape)
    return tf.Variable(initial)

def conv2d(x, W):
    return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')

def max_pool_2x2(x):
    return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

W_conv1 = weight_variable([5, 5, 1, 32])
b_conv1 = bias_variable([32])
x_image = tf.reshape(x, [-1, 28, 28, 1])
h_conv1 = tf.nn.relu(conv2d(x_image, W_conv1) + b_conv1)
h_pool1 = max_pool_2x2(h_conv1)

W_conv2 = weight_variable([5, 5, 32, 64])
b_conv2 = bias_variable([64])
h_conv2 = tf.nn.relu(conv2d(h_pool1, W_conv2) + b_conv2)
h_pool2 = max_pool_2x2(h_conv2)

W_fc1 = weight_variable([7 * 7 * 64, 1024])
b_fc1 = bias_variable([1024])
h_pool2_flat = tf.reshape(h_pool2, [-1, 7 * 7 * 64])
h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)

keep_prob = tf.placeholder(tf.float32)
h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob)

W_fc2 = weight_variable([1024, 10])
b_fc2 = bias_variable([10])
y_conv = tf.nn.softmax(tf.matmul(h_fc1_drop, W_fc2) + b_fc2)

init_op = tf.global_variables_initializer()
saver = tf.train.Saver()
with tf.Session() as sess:
    sess.run(init_op)
    saver.restore(sess, "learning_tensorflow/model.ckpt")  # load the parameters saved earlier
    prediction = tf.argmax(y_conv, 1)
    predint = prediction.eval(feed_dict={x: [result], keep_prob: 1.0}, session=sess)
    print('recognize result:')
    print(predint[0])
```
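The network only gives sensible answers when its input looks like MNIST: 784 values from a 28×28 image, with the stroke close to 1 and the background close to 0. `imageprepare` gets there by inverting the grayscale values, because a digit drawn in black on white is the reverse of MNIST's light-on-dark digits. A vectorized NumPy sketch of the same step with an explicit shape check (Python 3; `to_mnist_batch` is a hypothetical name):

```python
import numpy as np


def to_mnist_batch(gray: np.ndarray) -> np.ndarray:
    """Turn a 28x28 uint8 grayscale image (0 = black, 255 = white) into a 1x784 batch."""
    if gray.shape != (28, 28):
        raise ValueError("expected a 28x28 image, got %r" % (gray.shape,))
    # Invert: white paper -> 0.0, black ink -> 1.0, matching MNIST's light-on-dark digits
    inverted = (255.0 - gray.astype(np.float32)) / 255.0
    return inverted.reshape(1, 784)
```

With PIL, the input would be `np.asarray(Image.open(path).convert('L').resize((28, 28)))`, and the result can be fed directly as `feed_dict={x: batch, keep_prob: 1.0}` since it already has the batch dimension.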

4. Remote calls through an API

API code (a Flask app listening on port 6001):

```python
# coding=UTF-8
from flask import Flask, request, url_for

from utils import QssClient as utl
from utils import TensorClient as tcf

app = Flask(__name__)
foo = utl.QssClient()      # saves the uploaded base64 image to disk
foo2 = tcf.TensorClient()  # runs the TensorFlow model on the saved image


@app.route('/')
def api_root():
    return 'Welcome'


@app.route('/articles')
def api_articles():
    return 'List of ' + url_for('api_articles')


@app.route('/articles/<articleid>')
def api_article(articleid):
    return 'You are reading ' + articleid


@app.route('/test1', methods=['GET', 'POST'])
def test1():
    # Recognize with the frozen graph ../save_bp/lenet5.pb
    resultCode = '0'
    print(request.method)
    if request.method == 'POST':
        dic = request.form.to_dict()
        print(dic['img'])
        foo.baseConvert(dic['img'])
        resultCode = foo2.recognize("../pic_data/1.jpg", "../save_bp/lenet5.pb")
    else:
        print(request.args.get('img'))
    return resultCode


@app.route('/test', methods=['GET', 'POST'])
def test():
    # Recognize with the checkpoint restored in TensorClient.autoCheckImg()
    resultCode = '0'
    print(request.method)
    if request.method == 'POST':
        dic = request.form.to_dict()
        print(dic['img'])
        foo.baseConvert(dic['img'])
        resultCode = foo2.autoCheckImg()
    else:
        print(request.args.get('img'))
    return resultCode


if __name__ == '__main__':
    # debug=True enables the interactive Werkzeug debugger; do not expose it to untrusted networks
    app.run(host='0.0.0.0', port=6001, debug=True)
```

Utility classes (the `utils` package imported above):

QssClient.py:

```python
# coding=UTF-8
import base64


class QssClient(object):
    # Singleton: every QssClient() call returns the same instance
    def __new__(cls, *args, **kw):
        if not hasattr(cls, '_instance'):
            orig = super(QssClient, cls)
            cls._instance = orig.__new__(cls)
        return cls._instance

    def baseConvert(self, filedata):
        """Decode the base64 image sent by the client and save it as ../pic_data/1.jpg."""
        print("write ok1")
        print(filedata)
        imgdata = base64.b64decode(filedata)
        with open('../pic_data/1.jpg', 'wb') as f:
            f.write(imgdata)
        print("write ok2")
```
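`base64.b64decode` without `validate=True` silently discards characters outside the base64 alphabet, so a damaged upload (for example one where `+` arrived as a space) is still written to disk and only fails later, or is misrecognized. A Python 3 sketch of the decode-and-save step that rejects such input instead; `save_base64_image` is a hypothetical helper, not part of the original `utils` package:

```python
import base64
import binascii


def save_base64_image(encoded: str, path: str) -> int:
    """Decode base64 text and write it to path; return the number of bytes written."""
    try:
        # validate=True rejects characters outside the base64 alphabet instead of skipping them
        data = base64.b64decode(encoded, validate=True)
    except binascii.Error as exc:
        raise ValueError("img field is not valid base64: %s" % exc)
    with open(path, "wb") as f:
        f.write(data)
    return len(data)
```

In the Flask handler, a `ValueError` here could be turned into an error response rather than running recognition on a broken file.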

TensorClient.py:

```python
#coding=utf-8
from PIL import Image
import numpy as np
import tensorflow as tf


class TensorClient(object):
    # Singleton: every TensorClient() call returns the same instance
    def __new__(cls, *args, **kw):
        if not hasattr(cls, '_instance'):
            orig = super(TensorClient, cls)
            cls._instance = orig.__new__(cls)
        return cls._instance

    def imageprepare(self):
        # Image saved by QssClient.baseConvert(); replace with your own path if needed
        # Training note: 5000 steps were also tried; 20000+ steps reach about 99% accuracy
        file_name = '../pic_data/1.jpg'
        # In a terminal, 'mogrify -format png *.jpg' converts JPG files to PNG
        # Grayscale, resized to 28x28 like the MNIST images
        im = Image.open(file_name).convert('L').resize((28, 28))
        im.save("../pic_data/sample.png")  # keep a copy of the preprocessed input
        tv = list(im.getdata())  # get pixel values
        # Normalize pixels to 0..1: 0 is pure white, 1 is pure black
        return [(255 - x) * 1.0 / 255.0 for x in tv]

    def weight_variable(self, shape):
        initial = tf.truncated_normal(shape, stddev=0.1)
        return tf.Variable(initial)

    def bias_variable(self, shape):
        initial = tf.constant(0.1, shape=shape)
        return tf.Variable(initial)

    def conv2d(self, x, W):
        return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')

    def max_pool_2x2(self, x):
        return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

    def autoCheckImg(self):
        # The model is built in a fresh graph on every call. With one shared default graph,
        # a second call would create variables named Variable_10, Variable_11, ..., which are
        # not in the checkpoint, so restore would fail until the service was restarted.
        result = self.imageprepare()
        with tf.Graph().as_default():
            x = tf.placeholder(tf.float32, [None, 784])
            # Unused, but created so that variable names match the checkpoint
            W = tf.Variable(tf.zeros([784, 10]))
            b = tf.Variable(tf.zeros([10]))
            W_conv1 = self.weight_variable([5, 5, 1, 32])
            b_conv1 = self.bias_variable([32])
            x_image = tf.reshape(x, [-1, 28, 28, 1])
            h_conv1 = tf.nn.relu(self.conv2d(x_image, W_conv1) + b_conv1)
            h_pool1 = self.max_pool_2x2(h_conv1)
            W_conv2 = self.weight_variable([5, 5, 32, 64])
            b_conv2 = self.bias_variable([64])
            h_conv2 = tf.nn.relu(self.conv2d(h_pool1, W_conv2) + b_conv2)
            h_pool2 = self.max_pool_2x2(h_conv2)
            W_fc1 = self.weight_variable([7 * 7 * 64, 1024])
            b_fc1 = self.bias_variable([1024])
            h_pool2_flat = tf.reshape(h_pool2, [-1, 7 * 7 * 64])
            h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)
            keep_prob = tf.placeholder(tf.float32)
            h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob)
            W_fc2 = self.weight_variable([1024, 10])
            b_fc2 = self.bias_variable([10])
            y_conv = tf.nn.softmax(tf.matmul(h_fc1_drop, W_fc2) + b_fc2)
            saver = tf.train.Saver()
            # Alternative: tf.train.import_meta_graph("../learning20000/model.ckpt.meta")
            # restores the graph structure from the .meta file instead of rebuilding it
            with tf.Session() as sess:
                saver.restore(sess, "../learning20000/model.ckpt")  # load the saved parameters
                prediction = tf.argmax(y_conv, 1)
                predint = prediction.eval(feed_dict={x: [result], keep_prob: 1.0}, session=sess)
                print('recognize result:')
                print(predint[0])
                return str(predint[0])

    # Recognition accuracy of this method is poor. One possible cause (unverified):
    # unlike imageprepare(), the min-max scaling below does not invert the image.
    def recognize(self, img_path, pb_file_path):
        with tf.Graph().as_default():
            output_graph_def = tf.GraphDef()
            with open(pb_file_path, "rb") as f:
                output_graph_def.ParseFromString(f.read())
            tf.import_graph_def(output_graph_def, name="")
            with tf.Session() as sess:
                # Assuming the .pb is a frozen graph, its weights are constants: nothing to initialize
                input_x = sess.graph.get_tensor_by_name("input:0")
                keep_prob = sess.graph.get_tensor_by_name("keep_prob:0")
                out_softmax = sess.graph.get_tensor_by_name("softmax:0")
                img = Image.open(img_path).convert('L').resize((28, 28))
                # Row-major pixel list, scaled to 0..1 with min-max normalization
                pixels = [float(img.getpixel((j, i))) for i in range(28) for j in range(28)]
                pixelmin, pixelmax = min(pixels), max(pixels)
                span = (pixelmax - pixelmin) or 1.0  # avoid dividing by zero on a blank image
                arr = [(p - pixelmin) / span for p in pixels]
                img_out_softmax = sess.run(out_softmax, feed_dict={
                    input_x: np.reshape(arr, [-1, 784]), keep_prob: 1.0})
                print("img_out_softmax:", img_out_softmax)
                prediction_labels = np.argmax(img_out_softmax, axis=1)
                print("label:", prediction_labels)
                return str(prediction_labels[0])
```
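`recognize()` scales each image with min-max normalization, (p - min) / (max - min), and keeps dark pixels near 0, whereas `imageprepare()` inverts so that dark strokes become 1. Whether inversion is right for `lenet5.pb` depends on how that model was trained, which the post does not show. A pure-Python 3 sketch that makes the choice explicit and handles a blank image where max equals min (the function name and the `invert` flag are hypothetical):

```python
def minmax_normalize(pixels, invert=False):
    """Scale grayscale values to [0, 1]; with invert=True dark pixels map to 1."""
    lo, hi = min(pixels), max(pixels)
    if hi == lo:
        # No contrast (e.g. an empty canvas): return zeros instead of dividing by zero
        return [0.0] * len(pixels)
    scaled = [(p - lo) / (hi - lo) for p in pixels]
    return [1.0 - v for v in scaled] if invert else scaled


print(minmax_normalize([10, 20, 30]))               # [0.0, 0.5, 1.0]
print(minmax_normalize([10, 20, 30], invert=True))  # [1.0, 0.5, 0.0]
```

Trying both settings on a few known digits is a cheap way to find out which one the `.pb` model expects.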

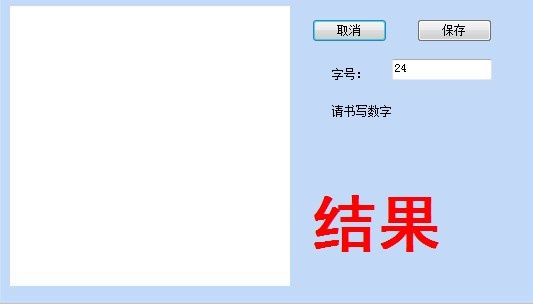

5. Test client

Key code, the POST request:

```csharp
public static string ImageHttpPost(string Url, string postDataStr)
{
    try
    {
        //WriteLog(DateTime.Now + " Image recognition Url:" + Url + " postDataStr:" + postDataStr);
        // base64 may contain "+", which a form parser decodes as a space, so escape it
        postDataStr = postDataStr.Replace("+", "%2B");
        HttpWebRequest request = (HttpWebRequest)WebRequest.Create(Url);
        request.Method = "POST";
        request.Timeout = 10000;
        //request.UserAgent = "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.2; .NET CLR 4.0.30319;)";
        request.ContentType = "application/x-www-form-urlencoded";
        request.ContentLength = postDataStr.Length; // ASCII body: one byte per character
        // If needed, just add the following two properties
        //request.KeepAlive = false;
        //request.ProtocolVersion = HttpVersion.Version10;
        using (StreamWriter writer = new StreamWriter(request.GetRequestStream(), Encoding.ASCII))
        {
            writer.Write(postDataStr);
        }
        //ServicePointManager.SecurityProtocol = SecurityProtocolType.Tls;
        //ServicePointManager.SecurityProtocol = (SecurityProtocolType)3072;
        ServicePointManager.SecurityProtocol = SecurityProtocolType.Ssl3 | SecurityProtocolType.Tls;
        using (HttpWebResponse response = (HttpWebResponse)request.GetResponse())
        using (Stream myResponseStream = response.GetResponseStream())
        using (StreamReader myStreamReader = new StreamReader(myResponseStream, Encoding.GetEncoding("utf-8")))
        {
            return myStreamReader.ReadToEnd();
        }
    }
    catch (Exception ex)
    {
        Console.WriteLine(ex);
        return null;
    }
}
```
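The `Replace("+", "%2B")` line matters because base64 output can contain `+`, and the `application/x-www-form-urlencoded` format decodes a bare `+` as a space; Flask's `request.form` follows that rule, so an unescaped image arrives corrupted. Python's `urllib.parse.parse_qs` applies the same rule, which makes the effect easy to reproduce. A Python 3 sketch with a hypothetical helper `parse_img_field`:

```python
import base64
from urllib.parse import parse_qs


def parse_img_field(image_bytes: bytes, escape_plus: bool) -> str:
    """Build an "img=<base64>" form body and return the value a form parser recovers."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    value = encoded.replace("+", "%2B") if escape_plus else encoded
    return parse_qs("img=" + value)["img"][0]


sample = b"\xfb\xff"  # encodes to "+/8=", which contains a "+"
print(repr(parse_img_field(sample, escape_plus=True)))   # '+/8='
print(repr(parse_img_field(sample, escape_plus=False)))  # ' /8=' (the "+" became a space)
```

Only `+` needs this treatment for the body to parse correctly here; `/` and `=` inside the value survive form parsing, although a full percent-encoding of the value would also be valid.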

Converting the image to base64:

```csharp
/// <summary>
/// Converts an image file to a base64 string (re-encoded as JPEG)
/// </summary>
/// <param name="Imagefilename">Path of the image file</param>
/// <returns>The base64 string, or null on failure</returns>
protected string ImgToBase64String(string Imagefilename)
{
    try
    {
        // Dispose the Bitmap so the image file is not left locked
        using (Bitmap bmp = new Bitmap(Imagefilename))
        using (MemoryStream ms = new MemoryStream())
        {
            bmp.Save(ms, System.Drawing.Imaging.ImageFormat.Jpeg);
            return Convert.ToBase64String(ms.ToArray());
        }
    }
    catch (Exception)
    {
        return null;
    }
}
```

Calling the API:

```csharp
//MessageBox.Show("Saved!");
var base64img = ImgToBase64String(filestring);
//MessageBox.Show("Image ready!");
// POST the image to the recognition API
var value = ImageHttpPost("http://192.168.1.168:6001/test", "img=" + base64img);
label3.Text = "Recognition finished";
if (value == null)
{
    label2.Text = "Not recognized";
}
else
{
    label2.Text = value;
}
```
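To test the service without the Windows client, the same request can be sent from Python. A Python 3 sketch using only the standard library, assuming the server address and `/test` route used by the C# code; `build_recognize_request` and `recognize_file` are hypothetical names. `urlencode` percent-escapes the reserved characters in the base64 text (`+`, `/`, `=`), so no manual `%2B` replacement is needed:

```python
import base64
from urllib.parse import urlencode
from urllib.request import Request, urlopen


def build_recognize_request(url: str, image_bytes: bytes) -> Request:
    """Build a form POST carrying the image as base64 in the "img" field."""
    # urlencode escapes "+", "/" and "=", so the base64 text survives form parsing
    body = urlencode({"img": base64.b64encode(image_bytes).decode("ascii")})
    return Request(
        url,
        data=body.encode("ascii"),
        headers={"Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )


def recognize_file(url: str, path: str, timeout: float = 10.0) -> str:
    """Send an image file to the service and return its response text."""
    with open(path, "rb") as f:
        request = build_recognize_request(url, f.read())
    with urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8")
```

Usage: `print(recognize_file("http://192.168.1.168:6001/test", "digit.jpg"))`, where `digit.jpg` is a placeholder for your own image. The 10-second timeout mirrors `request.Timeout = 10000` in the C# client.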

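With this client, you write a digit from 0 to 9 by hand in the area on the left and click Save; the client then calls the service API to recognize it.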

*************** The content above is the result of my own development and testing. If you repost or quote it, please credit the source. Thank you. ***************

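Unless otherwise stated, articles on this site are original. If you repost, please credit the source: Tensorflow训练识别手写数字0-9 (Training TensorFlow to recognize handwritten digits 0-9) - Python技术站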
