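(P.S. This is my own distillation of the official documentation, based on how I understand it. If you spot mistakes, corrections are very welcome.)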

0x01: Getting and representing the dataset

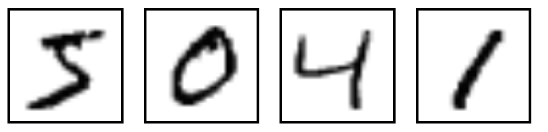

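The dataset can be downloaded automatically by code. The data consists of images of handwritten digits plus a label for each image that tells us which digit it shows. The four images below, for example, are labeled 5, 0, 4, and 1.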

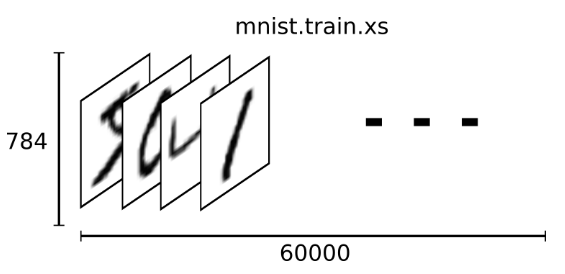
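The loader used in this post can also return labels as one-hot vectors (its `one_hot` option). Here is a minimal sketch of that encoding for the four labels above. The helper name `to_one_hot` is made up for illustration, and it uses plain NumPy indexing rather than the loader's own implementation.

```python
import numpy as np


def to_one_hot(labels, num_classes=10):
    """Turn integer class labels into one-hot rows (hypothetical helper)."""
    one_hot = np.zeros((labels.shape[0], num_classes))
    # Row i gets a 1 in the column given by labels[i].
    one_hot[np.arange(labels.shape[0]), labels] = 1
    return one_hot


labels = np.array([5, 0, 4, 1], dtype=np.uint8)
encoded = to_one_hot(labels)
print(encoded[0])  # [0. 0. 0. 0. 0. 1. 0. 0. 0. 0.]
```

Each row has exactly one 1, in the position of the digit it stands for.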

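The downloaded data comes in two parts: 60,000 training examples and 10,000 test examples (mnist.test). The loader used in this post sets aside the first 5,000 training examples as a validation set (mnist.validation), which leaves 55,000 examples in mnist.train. Each example has two parts: an image of a handwritten digit (xs) and the matching label (ys). Both the training set and the test set contain xs and ys. For example, the training images are mnist.train.images and the training labels are mnist.train.labels.

Each image is unrolled pixel by pixel into a vector, which is then processed further to recognize the digit in the image. So how are the training images represented? Each MNIST image is 28 × 28 = 784 pixels, so mnist.train.images is a tensor of shape [55000, 784] (if tensors are new to you, a quick search will help the idea stick). The first dimension indexes the images and the second dimension indexes the pixels within each image. Every element of the tensor is the intensity of one pixel in one image, a value between 0 and 1.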
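To make the flattening concrete, here is a small sketch that uses random pixels as a stand-in for real MNIST data (the array `raw` and its size of 4 images are assumptions for illustration only). It unrolls each 28 × 28 image into a 784-element row and rescales the intensities to the range 0 to 1.

```python
import numpy as np

# Synthetic stand-in for MNIST: 4 fake "images" of 28x28 uint8 pixels.
rng = np.random.default_rng(0)
raw = rng.integers(0, 256, size=(4, 28, 28, 1), dtype=np.uint8)

# Flatten [num_examples, rows, cols, depth] -> [num_examples, rows * cols].
flat = raw.reshape(raw.shape[0], raw.shape[1] * raw.shape[2])

# Rescale intensities from [0, 255] to [0.0, 1.0].
images = flat.astype(np.float32) / 255.0

print(images.shape)  # (4, 784)
```

The pixel at row `r`, column `c` of image `i` ends up at `images[i, r * 28 + c]`.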

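0x02: Running the code

The code is split into two parts: input_data.py, which downloads and reads the data, and the main program, mnist.py. Here is input_data.py: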

```python
# Copyright 2015 Google Inc. All Rights Reserved.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
#     http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.
# ==============================================================================
"""Functions for downloading and reading MNIST data."""
from __future__ import absolute_import
from __future__ import division
from __future__ import print_function

import gzip
import os

import tensorflow.python.platform

import numpy
from six.moves import urllib
from six.moves import xrange  # pylint: disable=redefined-builtin
import tensorflow as tf

SOURCE_URL = 'http://yann.lecun.com/exdb/mnist/'


def maybe_download(filename, work_directory):
  """Download the data from Yann's website, unless it's already here."""
  if not os.path.exists(work_directory):
    os.mkdir(work_directory)
  filepath = os.path.join(work_directory, filename)
  if not os.path.exists(filepath):
    filepath, _ = urllib.request.urlretrieve(SOURCE_URL + filename, filepath)
    statinfo = os.stat(filepath)
    print('Successfully downloaded', filename, statinfo.st_size, 'bytes.')
  return filepath


def _read32(bytestream):
  dt = numpy.dtype(numpy.uint32).newbyteorder('>')
  return numpy.frombuffer(bytestream.read(4), dtype=dt)[0]


def extract_images(filename):
  """Extract the images into a 4D uint8 numpy array [index, y, x, depth]."""
  print('Extracting', filename)
  with gzip.open(filename) as bytestream:
    magic = _read32(bytestream)
    if magic != 2051:
      raise ValueError(
          'Invalid magic number %d in MNIST image file: %s' %
          (magic, filename))
    num_images = _read32(bytestream)
    rows = _read32(bytestream)
    cols = _read32(bytestream)
    buf = bytestream.read(rows * cols * num_images)
    data = numpy.frombuffer(buf, dtype=numpy.uint8)
    data = data.reshape(num_images, rows, cols, 1)
    return data


def dense_to_one_hot(labels_dense, num_classes=10):
  """Convert class labels from scalars to one-hot vectors."""
  num_labels = labels_dense.shape[0]
  index_offset = numpy.arange(num_labels) * num_classes
  labels_one_hot = numpy.zeros((num_labels, num_classes))
  labels_one_hot.flat[index_offset + labels_dense.ravel()] = 1
  return labels_one_hot


def extract_labels(filename, one_hot=False):
  """Extract the labels into a 1D uint8 numpy array [index]."""
  print('Extracting', filename)
  with gzip.open(filename) as bytestream:
    magic = _read32(bytestream)
    if magic != 2049:
      raise ValueError(
          'Invalid magic number %d in MNIST label file: %s' %
          (magic, filename))
    num_items = _read32(bytestream)
    buf = bytestream.read(num_items)
    labels = numpy.frombuffer(buf, dtype=numpy.uint8)
    if one_hot:
      return dense_to_one_hot(labels)
    return labels


class DataSet(object):

  def __init__(self, images, labels, fake_data=False, one_hot=False,
               dtype=tf.float32):
    """Construct a DataSet.

    one_hot arg is used only if fake_data is true.  `dtype` can be either
    `uint8` to leave the input as `[0, 255]`, or `float32` to rescale into
    `[0, 1]`.
    """
    dtype = tf.as_dtype(dtype).base_dtype
    if dtype not in (tf.uint8, tf.float32):
      raise TypeError('Invalid image dtype %r, expected uint8 or float32' %
                      dtype)
    if fake_data:
      self._num_examples = 10000
      self.one_hot = one_hot
    else:
      assert images.shape[0] == labels.shape[0], (
          'images.shape: %s labels.shape: %s' % (images.shape,
                                                 labels.shape))
      self._num_examples = images.shape[0]
      # Convert shape from [num examples, rows, columns, depth]
      # to [num examples, rows*columns] (assuming depth == 1)
      assert images.shape[3] == 1
      images = images.reshape(images.shape[0],
                              images.shape[1] * images.shape[2])
      if dtype == tf.float32:
        # Convert from [0, 255] -> [0.0, 1.0].
        images = images.astype(numpy.float32)
        images = numpy.multiply(images, 1.0 / 255.0)
    self._images = images
    self._labels = labels
    self._epochs_completed = 0
    self._index_in_epoch = 0

  @property
  def images(self):
    return self._images

  @property
  def labels(self):
    return self._labels

  @property
  def num_examples(self):
    return self._num_examples

  @property
  def epochs_completed(self):
    return self._epochs_completed

  def next_batch(self, batch_size, fake_data=False):
    """Return the next `batch_size` examples from this data set."""
    if fake_data:
      fake_image = [1] * 784
      if self.one_hot:
        fake_label = [1] + [0] * 9
      else:
        fake_label = 0
      return [fake_image for _ in xrange(batch_size)], [
          fake_label for _ in xrange(batch_size)]
    start = self._index_in_epoch
    self._index_in_epoch += batch_size
    if self._index_in_epoch > self._num_examples:
      # Finished epoch
      self._epochs_completed += 1
      # Shuffle the data
      perm = numpy.arange(self._num_examples)
      numpy.random.shuffle(perm)
      self._images = self._images[perm]
      self._labels = self._labels[perm]
      # Start next epoch
      start = 0
      self._index_in_epoch = batch_size
      assert batch_size <= self._num_examples
    end = self._index_in_epoch
    return self._images[start:end], self._labels[start:end]


def read_data_sets(train_dir, fake_data=False, one_hot=False, dtype=tf.float32):
  class DataSets(object):
    pass
  data_sets = DataSets()
  if fake_data:
    def fake():
      return DataSet([], [], fake_data=True, one_hot=one_hot, dtype=dtype)
    data_sets.train = fake()
    data_sets.validation = fake()
    data_sets.test = fake()
    return data_sets
  TRAIN_IMAGES = 'train-images-idx3-ubyte.gz'
  TRAIN_LABELS = 'train-labels-idx1-ubyte.gz'
  TEST_IMAGES = 't10k-images-idx3-ubyte.gz'
  TEST_LABELS = 't10k-labels-idx1-ubyte.gz'
  VALIDATION_SIZE = 5000
  local_file = maybe_download(TRAIN_IMAGES, train_dir)
  train_images = extract_images(local_file)
  local_file = maybe_download(TRAIN_LABELS, train_dir)
  train_labels = extract_labels(local_file, one_hot=one_hot)
  local_file = maybe_download(TEST_IMAGES, train_dir)
  test_images = extract_images(local_file)
  local_file = maybe_download(TEST_LABELS, train_dir)
  test_labels = extract_labels(local_file, one_hot=one_hot)
  validation_images = train_images[:VALIDATION_SIZE]
  validation_labels = train_labels[:VALIDATION_SIZE]
  train_images = train_images[VALIDATION_SIZE:]
  train_labels = train_labels[VALIDATION_SIZE:]
  data_sets.train = DataSet(train_images, train_labels, dtype=dtype)
  data_sets.validation = DataSet(validation_images, validation_labels,
                                 dtype=dtype)
  data_sets.test = DataSet(test_images, test_labels, dtype=dtype)
  return data_sets
```
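The image files that the loader reads start with four big-endian 32-bit integers (a magic number, 2051, then the image count, rows, and columns), followed by the raw pixel bytes. The sketch below builds a tiny fake file in memory so that it runs without a download. The 2 × 3 × 3 size and the use of `struct` are my own choices for illustration, not what the listing itself does.

```python
import gzip
import io
import struct

import numpy as np

# Build a tiny in-memory image file: magic 2051, 2 images of 3x3 pixels.
header = struct.pack('>IIII', 2051, 2, 3, 3)
pixels = bytes(range(18))
blob = gzip.compress(header + pixels)

with gzip.open(io.BytesIO(blob)) as stream:
    # Read the four big-endian uint32 header fields in one call.
    magic, num_images, rows, cols = struct.unpack('>IIII', stream.read(16))
    if magic != 2051:
        raise ValueError('Invalid magic number %d' % magic)
    buf = stream.read(rows * cols * num_images)
    data = np.frombuffer(buf, dtype=np.uint8)
    data = data.reshape(num_images, rows, cols, 1)

print(data.shape)  # (2, 3, 3, 1)
```

Label files follow the same idea with magic number 2049 and only an item count in the header.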


Unless otherwise noted, articles on this site are original. If you repost this one, please credit the source: tensorflow的MNIST教程 - Python技术站
