http://stats.stackexchange.com/questions/145768/importance-of-local-response-normalization-in-cnn

Caffe's explanation:

The local response normalization layer performs a kind of “lateral inhibition” by normalizing over local input regions. In other words, it is lateral inhibition, and at first glance it looks just like an activation function.
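To make "normalizing over local input regions" concrete, here is a minimal NumPy sketch of across-channel LRN. It assumes the commonly documented form, where each value is divided by `(k + (alpha / n) * sum of squares over n neighbouring channels) ** beta`. The function name `lrn_across_channels`, the `(channels, height, width)` layout, and the default values are illustrative assumptions, not Caffe's actual implementation.

```python
import numpy as np

def lrn_across_channels(a, local_size=5, alpha=1e-4, beta=0.75, k=1.0):
    """Across-channel LRN sketch for an array shaped (channels, height, width)."""
    channels = a.shape[0]
    half = local_size // 2
    squared = a.astype(np.float64) ** 2
    out = np.empty_like(a, dtype=np.float64)
    for c in range(channels):
        # Neighbouring channels around c, clipped at the channel boundaries.
        lo = max(0, c - half)
        hi = min(channels, c + half + 1)
        window_sum = squared[lo:hi].sum(axis=0)
        # Strong activity in the neighbourhood means a larger divisor,
        # so the output is damped more.
        scale = (k + (alpha / local_size) * window_sum) ** beta
        out[c] = a[c] / scale
    return out

rng = np.random.default_rng(0)
activations = rng.normal(size=(8, 4, 4))
# alpha=1.0 is exaggerated here so the damping effect is easy to see.
normalized = lrn_across_channels(activations, local_size=5, alpha=1.0)
```

Unlike an element-wise activation function, the output at each position depends on the neighbouring channels as well: the same activation is suppressed more when its neighbours are also strongly active.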

Several explanations follow, taken from the answers at the link above and quoted here:

(1) It makes the optimization faster

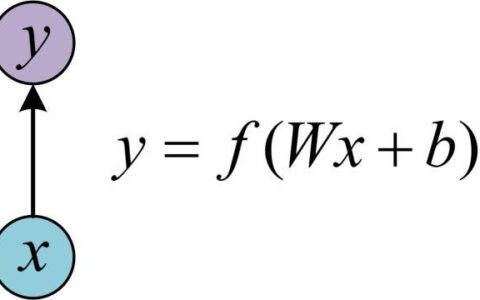

Here is my suggested answer, though I don't claim to be knowledgeable. When performing gradient descent on a linear model, the error surface is quadratic, with the curvature determined by $XX^T$, where $X$ is your input. The ideal error surface for gradient descent has the same curvature in all directions (otherwise the step size is too small in some directions and too big in others). Normalising your inputs by rescaling them to mean zero and variance 1 helps and is fast: the directions along each dimension now all have the same curvature, which in turn bounds the curvature in other directions.
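The curvature argument can be checked numerically. The sketch below is only an illustration: the two-feature dataset and the helper `curvature_condition_number` are made up for this purpose. It compares the condition number of the curvature matrix before and after standardization. Samples are stored as rows here, so the matrix is computed as `X.T @ X` rather than $XX^T$.

```python
import numpy as np

rng = np.random.default_rng(0)
n_samples = 1000

# Two features on very different scales (an illustrative assumption).
X = np.column_stack([
    rng.normal(loc=5.0, scale=100.0, size=n_samples),
    rng.normal(loc=-2.0, scale=0.1, size=n_samples),
])

def curvature_condition_number(X):
    """Ratio of largest to smallest curvature of the quadratic error surface."""
    hessian = X.T @ X / X.shape[0]
    return np.linalg.cond(hessian)

# Standardize each feature to mean zero and variance 1.
X_std = (X - X.mean(axis=0)) / X.std(axis=0)

cond_raw = curvature_condition_number(X)
cond_std = curvature_condition_number(X_std)
print(f"condition number, raw inputs:          {cond_raw:.1f}")
print(f"condition number, standardized inputs: {cond_std:.3f}")
```

A condition number close to 1 means the curvature is nearly the same in every direction, so a single step size suits all of them.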

The optimal solution would be to sphere/whiten the inputs to each neuron; however, this is computationally too expensive. LCN can be justified as an approximate whitening based on the assumption of a high degree of correlation between neighbouring pixels (or channels). So I would claim the benefit is that the error surface is more benign for SGD: a single learning rate works well across the input dimensions (of each neuron).
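For comparison, full whitening can be sketched as follows. This uses ZCA whitening through an eigendecomposition of the covariance matrix, which is one common choice and not necessarily what the answer's author had in mind. The helper `zca_whiten` and the toy data are assumptions for illustration.

```python
import numpy as np

def zca_whiten(X, eps=1e-8):
    """Whiten X (samples as rows) so its covariance is close to the identity."""
    X_centered = X - X.mean(axis=0)
    cov = X_centered.T @ X_centered / X_centered.shape[0]
    # The eigendecomposition costs O(d^3) in the number of features d,
    # which is why doing this for the inputs of every neuron is expensive.
    eigvals, eigvecs = np.linalg.eigh(cov)
    W = eigvecs @ np.diag(1.0 / np.sqrt(eigvals + eps)) @ eigvecs.T
    return X_centered @ W

rng = np.random.default_rng(0)
# Correlated features, standing in for neighbouring pixels or channels.
mixing = np.array([[1.0, 0.9],
                   [0.0, 0.5]])
X = rng.normal(size=(2000, 2)) @ mixing

X_white = zca_whiten(X)
cov_white = X_white.T @ X_white / X_white.shape[0]
```

After whitening, `cov_white` is approximately the identity matrix: the features are decorrelated and have unit variance, so the curvature is equal in every direction and not just along each axis.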

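(2) The NIPS 2012 paper notes that ReLUs do not require this normalization, but the authors still add it because it helps generalization. Results improve by about 2% (it is not stated which activation function this refers to).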

Indeed, there seems to be no good explanation in a single place. The best approach is to read the articles it comes from:

The original AlexNet article explains a bit in Section 3.3:

Krizhevsky, Sutskever, and Hinton, ImageNet Classification with Deep Convolutional Neural Networks, NIPS 2012. www.cs.toronto.edu/~fritz/absps/imagenet.pdf

The exact way of doing this was proposed in (but not much extra info here):

Kevin Jarrett, Koray Kavukcuoglu, Marc’Aurelio Ranzato and Yann LeCun, What is the best Multi-Stage Architecture for Object Recognition?, ICCV 2009. yann.lecun.com/exdb/publis/pdf/jarrett-iccv-09.pdf

It was inspired by computational neuroscience:

S. Lyu and E. Simoncelli. Nonlinear image representation using divisive normalization. CVPR 2008. www.cns.nyu.edu/pub/lcv/lyu08b.pdf . This paper goes deeper into the math, and is in accordance with the answer of seanv507.

N. Pinto, D. D. Cox, and J. J. DiCarlo. Why is real-world visual object recognition hard? PLoS Computational Biology, 2008. http://journals.plos.org/ploscompbiol/article?id=10.1371/journal.pcbi.0040027

